ECEN-631 Robotic Vision

Instructor: Dr. D. J. Lee

Department of Electrical and Computer Engineering

450B Engineering Building

Email: djlee@byu.edu

Tel: (801) 422-5923

Course Overview:

Human vision seems to process visual information effortlessly. It can detect, localize, and recognize specific objects, and it can perceive and understand the surrounding 3-D world and use that 3-D information to accomplish many complicated tasks. Computer mimicry of human vision, however, is difficult and often impossible. The main properties of the 3-D world are geometric and dynamic. The objectives of 3-D computer vision are to compute these properties from one or more digital images and to use them to mimic human vision. This course studies three components of 3-D computer vision: basic image processing and feature detection techniques; camera models, geometry, and calibration; and geometrical models of single-, two-, and multiple-view systems.

The primary purpose of this course is not to give an exhaustive review of image processing techniques, but to cover methods commonly found in 3-D vision, such as dealing with image noise, feature extraction, 3-D object representation, and image matching. A few mathematical concepts that are crucial to understanding the solutions and algorithms will be reviewed to the level of detail necessary for the material covered in this course. Computer vision, like other engineering disciplines, has to ground itself in mathematics to study and solve its problems. For each problem analyzed in this course, a theoretical treatment of the problem and its mathematical model will be shown, and algorithms for solving it will be derived. The practical applicability of the 2-D and 3-D vision algorithms will also be discussed through case studies.

Prerequisites:

This course requires extensive software programming work. Students who do not have an interest in programming or extensive programming experience should not take this course. Students who lack skills or background in the following areas may be at a disadvantage in completing course assignments.

– C/C++ and Python programming experience

– Linux or Windows with Visual Studio

– Math and signal processing (ECEn 380)

– Computer Systems (ECEn 424)

– Real-Time Operating Systems (ECEn 425)

– Embedded Systems (ECEn 427)

Course Objectives:

The main objectives of this course are:

– Learn machine vision system design and applications

– Understand camera geometry and calibration

– Learn feature detection and tracking

– Extract 3-D information from single, two, and multiple views

– Estimate camera and object motion

References:

There is no textbook selected for this course. The following books are good references.

Machine Vision, 3rd Edition

E.R. Davies Elsevier, 2005

Introductory Techniques for 3-D Computer Vision

Emanuele Trucco & Alessandro Verri Prentice Hall, 1998

An Invitation to 3-D Vision - From Images to Geometric Models

Yi Ma, Stefano Soatto, Jana Kosecka, & Shankar Sastry Springer-Verlag, 2004

Multiple View Geometry in Computer Vision

Richard Hartley & Andrew Zisserman Cambridge University Press, 2003

An Introduction to 3D Computer Vision Techniques and Algorithms

Boguslaw Cyganek & J. Paul Siebert Wiley, 2009

3D Computer Vision: Efficient Methods and Applications

Christian Wöhler Springer-Verlag, 2009

Grading:

– Eight homework assignments (45%): based on individual performance

– Three team class projects: based on team and individual performance

o Visual Inspection (5%)

o Baseball catcher (10%)

o Vision APP or selected project (15%)

– One final team project (25%)

>= 93: A, >= 90: A-, >= 85: B+, >= 80: B, >= 75: B-, >= 70: C, >= 60: D, < 60: E

Equal Opportunity Statements

Preventing Sexual Harassment

Title IX of the Education Amendments of 1972 prohibits sex discrimination against any participant in an educational program or activity that receives federal funds. The act is intended to eliminate sex discrimination in education. Title IX covers discrimination in programs, admissions, activities, and student-to-student sexual harassment. BYU's policy against sexual harassment extends not only to employees of the university but to students as well. If you encounter unlawful sexual harassment or gender-based discrimination, please talk to your professor; contact the Equal Employment Office at 378-5895 or 367-5689 (24 hours); or contact the Honor Code Office at 378-2847.

Students with Disabilities

Brigham Young University is committed to providing a working and learning atmosphere which reasonably accommodates qualified persons with disabilities. If you have any disability which may impair your ability to complete this course successfully, please contact the Services for Students with Disabilities (SSD) Office at 378-2767. Reasonable academic accommodations are reviewed for all students who have qualified documented disabilities. Services are coordinated with the student and the instructor by the SSD Office. If you need assistance or if you feel you have been unlawfully discriminated against on the basis of disability, you may seek resolution through established grievance policy and procedures. You should contact the Equal Employment Office at 378-5895, D-282 ASB.

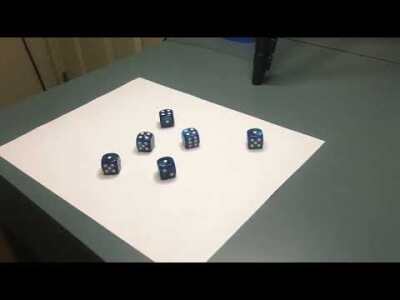

Baseball Catcher Project:

This team project uses a stereo-vision system to estimate the 3-D trajectory of a baseball and to control the catcher (an x-y stage) to catch it. The baseball, delivered by a baseball pitching machine, travels at a speed close to 60 mph. The distance between the launcher and the catcher is roughly 40 feet, and the baseball takes approximately 300 ms to travel the entire distance. The response and maximum travel time of the catcher is roughly 150 ms, depending on the distance to travel. Using a pair of FireWire cameras running at 60 frames per second, the vision system has to capture and process 15 to 20 image pairs, estimate the baseball trajectory, and control the catcher to catch the baseball, all in about 150 ms.
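The trajectory-estimation step can be illustrated with a minimal sketch. It assumes the stereo system has already produced timestamped 3-D ball positions in meters, that drag is negligible, and that gravity acts along the negative y axis; the function names, the axis convention, and the `z_catcher` plane are hypothetical choices for illustration, not the method used in the course.

```python
from statistics import mean

G = 9.81  # m/s^2; gravity assumed to act along -y (illustrative axis convention)


def fit_line(ts, vs):
    """Least-squares fit of v = a + b * t. Returns (a, b)."""
    t_bar, v_bar = mean(ts), mean(vs)
    sxx = sum((t - t_bar) ** 2 for t in ts)
    sxy = sum((t - t_bar) * (v - v_bar) for t, v in zip(ts, vs))
    b = sxy / sxx
    return v_bar - b * t_bar, b


def predict_catch_point(ts, points, z_catcher):
    """Predict (x, y, t_hit) where the ball crosses the plane z = z_catcher.

    ts     : sample times in seconds
    points : (x, y, z) ball positions in meters, one per sample time
    """
    xs = [p[0] for p in points]
    # Add the gravity drop back so that y becomes linear in t.
    ys = [p[1] + 0.5 * G * t * t for t, p in zip(ts, points)]
    zs = [p[2] for p in points]

    x0, vx = fit_line(ts, xs)
    y0, vy = fit_line(ts, ys)
    z0, vz = fit_line(ts, zs)

    t_hit = (z_catcher - z0) / vz  # time at which the ball reaches the plane
    x_hit = x0 + vx * t_hit
    y_hit = y0 + vy * t_hit - 0.5 * G * t_hit ** 2
    return x_hit, y_hit, t_hit
```

Because each axis reduces to a straight-line fit, the prediction can be refreshed after every new image pair, which lets the catcher start moving before all samples have arrived.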

Videos

Old Videos:

Video 13 (2015) (104.1 MB mp4 file, updated on 10/08/2015)

Video 12 (2014) (32.7 MB mp4 file, updated on 03/25/2014)

Video 11 (2013) (11.1 MB mp4 file, by John Barrus, Mark Crockett, and David Wheeler, updated on 03/25/2013)

Video 10 (2013) (11.4 MB mp4 file, by Kurtis Cahill, Michael Gardiner, and Nathan Harward, updated on 04/01/2013)

Video 9 (2012) (3.5 MB flv file, by Robert Klaus, Michael Plooster, Laith Sahawneh, updated on 04/11/2012)

Video 8 (2012) (8.7 MB flv file, by Nathan Edwards, Joel Howard, Peter Niedfeldt, updated on 04/11/2012)

Video 7 (2011) (4.0 MB MPEG-4 file, updated on 04/04/2011)

Video 6 (2011) (1.5 MB AVI file, updated on 03/29/2011)

Video 5 (2011) (5.1 MB AVI file, updated on 03/25/2011)

Video 4 (2011) (6.1 MB AVI file, updated on 03/25/2011)

Video 3 (2011) (8.7 MB AVI file, updated on 03/25/2011)

Monster Truck Project:

This project is designed to introduce color image processing theory and simple path planning, along with their implementations, through a fun real-time mobile computer vision project. This team project uses an NVIDIA Jetson TX1 board and an Intel RealSense camera to control a monster truck that navigates autonomously in an indoor environment. The objectives are to avoid obstacles such as orange cones and to stay within the course boundary set by blue foam tubes.
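As an illustration of the color-processing side of this project, the sketch below labels pixels as cone or boundary by thresholding in HSV space. The hue ranges and the saturation and value limits are hypothetical and would need tuning under the actual lighting; a real implementation on the Jetson would more likely use a vectorized image library than per-pixel Python.

```python
import colorsys

# Hypothetical hue ranges in degrees; not taken from the course material.
HUE_RANGES = {
    "orange_cone": (10.0, 40.0),
    "blue_tube": (200.0, 250.0),
}
MIN_SATURATION = 0.5  # reject washed-out pixels (assumed threshold)
MIN_VALUE = 0.3       # reject very dark pixels (assumed threshold)


def classify_pixel(r, g, b):
    """Return a label for an 8-bit RGB pixel, or None if it matches no range."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    if s < MIN_SATURATION or v < MIN_VALUE:
        return None
    hue_deg = h * 360.0
    for label, (lo, hi) in HUE_RANGES.items():
        if lo <= hue_deg <= hi:
            return label
    return None


def segment(image):
    """Label every pixel of an image given as rows of (r, g, b) tuples."""
    return [[classify_pixel(*px) for px in row] for row in image]
```

Thresholding on hue rather than on raw RGB values makes the labels less sensitive to brightness changes across the course.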

Videos

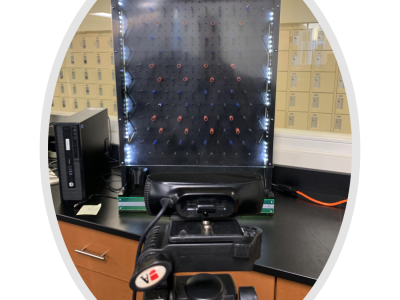

Visual Inspection:

This project is designed to introduce machine vision for visual inspection to students through a simple real-time visual inspection project. Machine vision technology has been used for many industrial factory automation applications for over 30 years. Through this project, students learn to design real-time vision algorithms and to use machine vision technology for visual inspection applications.

Image

Conveyor

Plinko Project:

This project is designed to introduce machine vision applications to students through a fun Plinko project. Through this project, students learn to perform color segmentation and to design a real-time robotic vision system. Three colored balls drop from the top of the board. A high-resolution camera is used to detect and monitor their locations and predict their landing spots. The vision system estimates their trajectories and moves the cup to catch them.
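One simple way to turn a color-segmented image into a ball location is to take the centroid of the segmented pixels. The sketch below assumes a binary mask that contains a single ball; the function name and the mask representation (rows of 0/1 values) are illustrative choices, not part of the project specification.

```python
def blob_centroid(mask):
    """Return the (row, col) centroid of the nonzero pixels in a 2-D mask.

    mask is a list of rows, each a list of 0/1 (or False/True) values.
    Returns None when the mask contains no foreground pixels.
    """
    count = 0
    row_sum = 0
    col_sum = 0
    for r, row in enumerate(mask):
        for c, on in enumerate(row):
            if on:
                count += 1
                row_sum += r
                col_sum += c
    if count == 0:
        return None
    return row_sum / count, col_sum / count
```

Tracking the centroid from frame to frame gives the sequence of positions from which a ball's path, and eventually its landing spot, can be estimated.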

Plinko Board

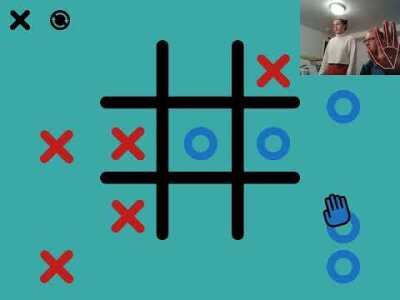

Semester Project:

This is a semester project that requires a demonstration, a PowerPoint presentation, and a written report. The project requirements are:

– 3-D vision preferable.

– Camera live input.

– Real-time performance.

– Live feedback.

– Quality of project (10%).

– 15-minute final demonstration and PowerPoint presentation in technical conference format (5%).

– 4-page final report in a double-column single-spaced technical paper format (5%).

Videos

Winter 2012

Fruit Ninja Demo by Danny Park, Justin Smith, Taylor Waddel, Craig Wilson (flv 4.7 MB)

Fruit Ninja by Danny Park, Justin Smith, Taylor Waddel, Craig Wilson (3gp 21 MB)

Finger Paint Demo by Kyle Browning, Brett Gottula, Joshua Smith (flv 5.6 MB)

Finger Paint by Kyle Browning, Brett Gottula, Joshua Smith (wmv 3.9 MB)

Magic Wand Demo by Robert Klaus, Michael Plooster, Laith Sahawneh (flv 13 MB)

Magic Wand by Robert Klaus, Michael Plooster, Laith Sahawneh (mov 122 MB)

Virtual Chess by Nathan Edwards, Joel Howard, Peter Niedfeldt (flv 4.5 MB)

Face Verification by Peter Chang, Alok Desai, Amin Nazaran (flv 6.0 MB)

Winter 2011

Blaster (wmv 3.9 MB)

License Plate (avi 12.9 MB)

Sign Language (mp4 5.8 MB)

Photo Viewer (wmv 24.7 MB)

Bat Tracker (m4v 8.7 MB)

Winter 2010

Magic Mirror 1 (dv 241 MB)

Magic Mirror 2 (dv 71.4 MB)

Finger Paint (dv 90.9 MB)

Winter 2008

Light Saber (Flash 3.6 MB)

Body Tracker (Flash 3.6 MB)

Helicopter Pose Estimation (avi 25.7 MB)